The Adoption-Value Gap: The State of AI in RevOps

- Foreword

- RevOps Has Entered the AI Era

- Research Highlights

- Adoption and Where Teams Are Starting

- The Foundational Blocker — Data Quality

- ROI and the Measurement Gap

- Where AI Is Trusted (And Where It’s Not)

- The Hidden Cost: GTM Knowledge Trapped in People’s Heads

- Investment, Infrastructure, and the Road Ahead

- What Comes Next for RevOps Leaders

- Methodology and Demographics

Foreword

In early 2025, we had the idea for a "State of AI in GTM" report, based on a trend we were seeing in the market. The people consistently asking about our most ahead-of-the-market use cases fell into one of two buckets:

- Forward-thinking sales leaders who understood the potential of buyer-journey and sales-touchpoint data, and what it could mean for their orgs.

- Revenue Operations leaders who deeply felt the pain of disconnected systems. They talked about AI chatbots tacked on top of broken infrastructure and poor adoption of expensive AI tools, and they had a clear perspective on where the market for AI in go-to-market needed to go.

In my initial research, the latter stood out to me. The sales leaders we spoke to were excited about what AI could do. The RevOps leaders were excited too, but they were also the ones left holding the bag when the implementation didn't work.

They were living with the gap between what AI promised and what it actually delivered inside their stack every day. And they had strong opinions about why.

That changed the focus of this project. RevOps was going through a fundamental shift from "owner of tooling and GTM data" to the function responsible for evaluating, pressure-testing, and ultimately operationalizing whatever AI tooling the rest of the org wants to adopt. If they were simultaneously both the most bought-in and the most frustrated, their perspective felt like the one worth understanding first.

So, over the course of the year, we put a simple question to hundreds of people: who is actually at the forefront of AI adoption in GTM?

We asked sales leaders, marketing leaders, RevOps, and even analytics teams, to keep the perspective honest. But the answer kept coming back to the same undeniable conclusion: somewhere between 50% and 80% of AI adoption in GTM is led or championed by RevOps.

This report is for them, and for the rest of go-to-market in need of a reality check for where to go from here.

There’s real optimism in this data, and there’s also real candor about what’s falling short.

The practitioners in this space are thoughtful, rigorous, and quietly building the operational backbone that AI in GTM will run on. We made this report because we wanted to understand their world better, and because doing that helps us build something better too.

We hope it’s useful.

RevOps Has Entered the AI Era

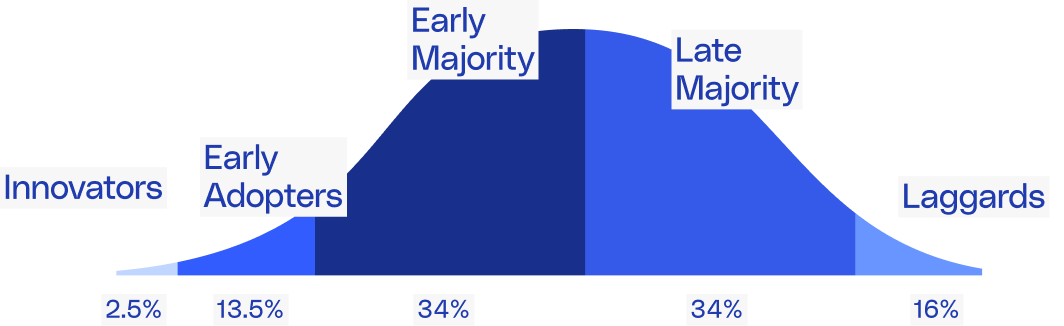

The adoption curve in RevOps is essentially complete. Every respondent in our survey is using AI or plans to within 12 months. Not a single organization reported "no plans." That level of unanimity is rare for any technology category.

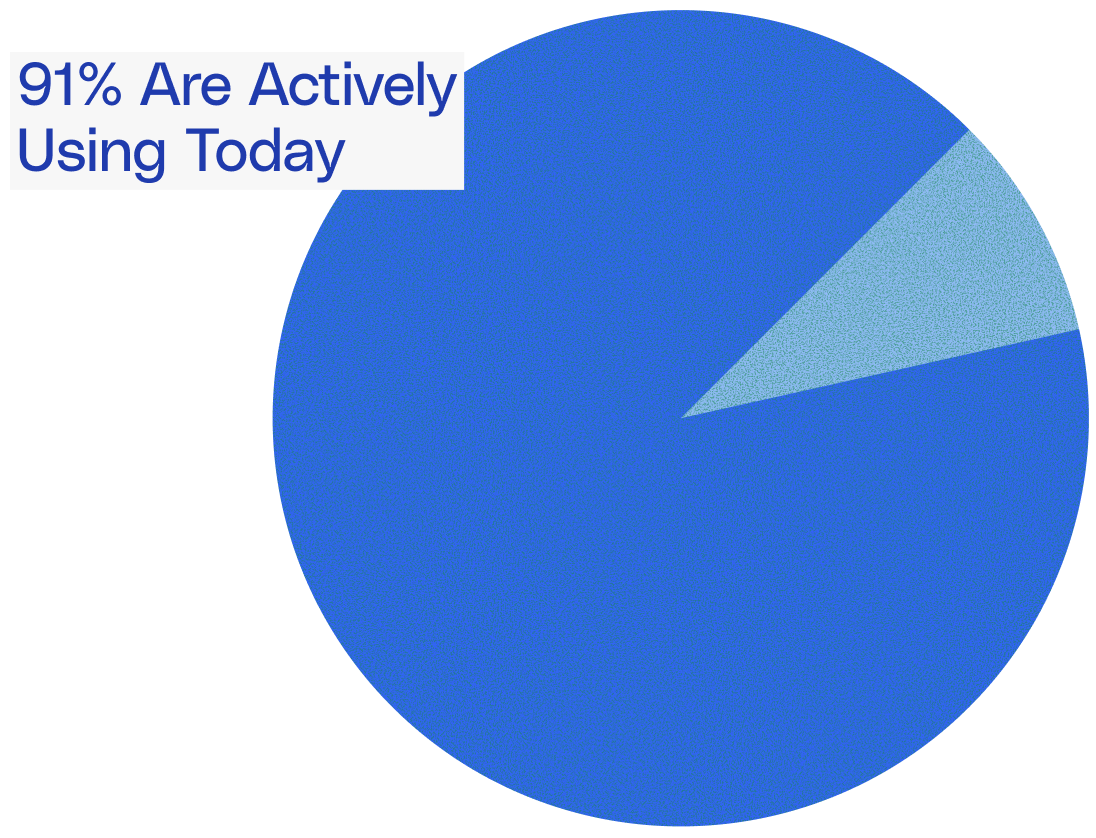

But full adoption hasn't produced full satisfaction. 91% of teams are actively using AI today, yet average satisfaction with current tools sits at just 3.9 out of 7.

The gap between those two numbers is the story of this report.

What follows is what RevOps teams are actually doing with AI, where it's falling short, and what needs to happen before AI can deliver on its real promise.

Adoption and Where Teams Are Starting

Across our dataset, not a single organization reported having no plans to implement AI. Half have already rolled it out widely, 41% are running pilots, and the remaining 9% expect to be live within 12 months.

That's a 100% intent rate, which is unusual for any technology category at this stage.

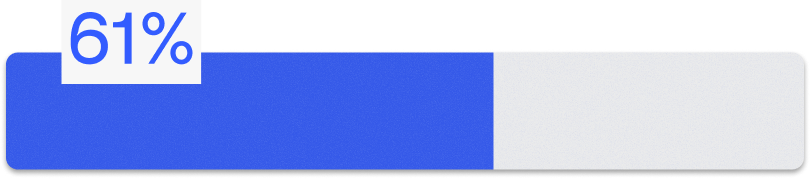

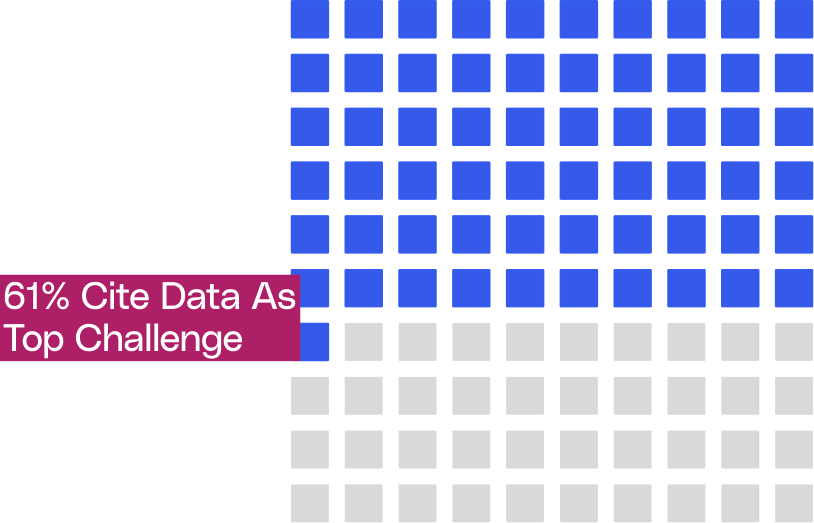

But the data holds a more interesting story than the adoption numbers themselves. Asked where they are applying AI, respondents most often named process automation and data quality, each at 61%.

RevOps teams are gravitating toward foundational, operational work first. They're using AI to clean data, automate repetitive processes, and score leads. The more strategic applications, like customer segmentation and deal analysis, are seeing lower adoption so far.

That sequencing makes sense. You can't build reliable AI-driven insights on top of messy data or broken processes. Starting with the infrastructure layer is a rational choice, and most teams seem to recognize that intuitively.

The concern is what happens after the foundation is laid. Right now, a significant number of teams appear to be treating these foundational use cases as the destination rather than the starting point. Process automation and data cleanup are necessary first steps, but they're also the lowest-ceiling applications of AI in a revenue context. The real value unlock comes when teams move from "AI helps us keep our CRM clean" to "AI tells us which deals are at risk and why."

That transition from operational efficiency to strategic intelligence is where most of the field still needs to go. And as we'll see in the chapters ahead, several factors are making that jump harder than it should be.

The Foundational Blocker — Data Quality

If adoption is no longer the problem, the obvious next question is what's holding teams back from getting more out of AI. The answer is overwhelmingly clear: data.

61% of respondents cite data quality and integration as their top challenge with AI in RevOps. That's nearly 1.5x the next closest barrier, change resistance, at 43%. Lack of internal AI skills (30%), high tool costs (26%), and security or trust concerns (26%) round out the list, but none come close to the data problem in terms of frequency.

As one (frustrated) respondent put it: "If you have garbage, there isn't much you can do with it even with AI." That sentiment showed up repeatedly in qualitative responses. Teams aren't struggling because AI tools lack capability. They're struggling because the data feeding those tools is fragmented, inconsistent, or incomplete. AI has a prerequisite problem, and most organizations haven't fully solved it yet.

The infrastructure conversation is evolving alongside this realization. CRM remains the center of gravity for most RevOps teams, but the data warehouse is gaining ground fast. A notable subset of respondents described CRM as increasingly just a user interface layer, with the warehouse becoming the actual source of truth underneath.

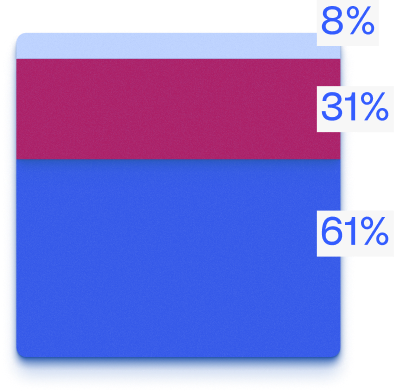

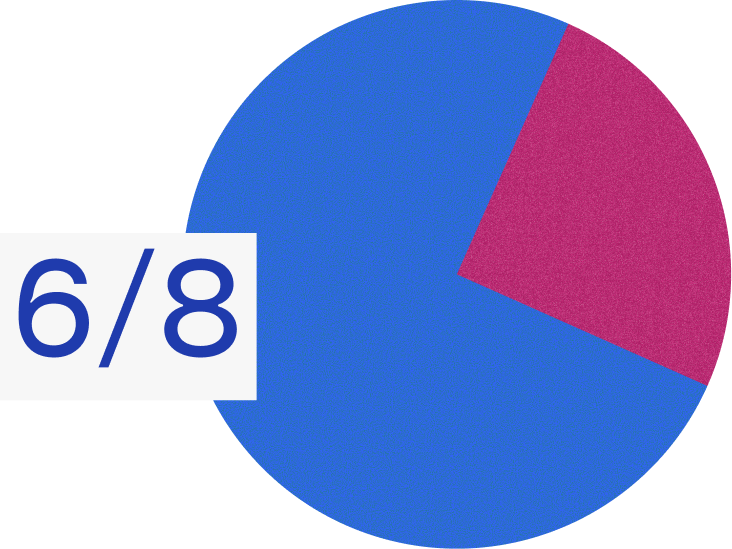

The field is still split on exactly how this plays out, but consensus is forming. When asked about the direction of their tech stack over the next few years, 62% expect an AI layer overlaid on top of consolidated systems. Another 31% expect outright consolidation into fewer tools. Only 8% anticipate adding more point solutions.

The implication is important.

The majority of RevOps leaders see the future as consolidation first, intelligence second. Get the data house in order, reduce the number of systems, and then layer AI on top. That's a meaningful shift from even a year ago, when the conversation was more focused on which new AI tools to buy. It also raises an uncomfortable question for teams that are currently investing in AI without addressing their data foundation. If most of the field believes clean, consolidated data is the prerequisite for AI to work, teams that skip that step are likely to end up with the same middling results at a higher price point.


ROI and the Measurement Gap

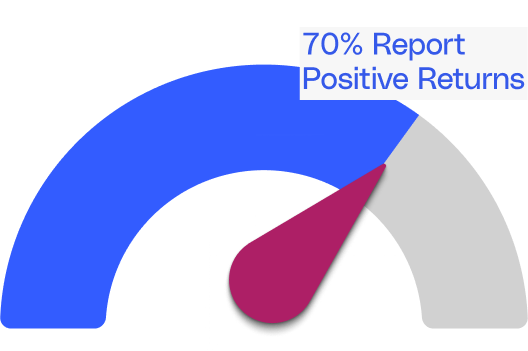

On the surface, the ROI picture looks…encouraging.

70% of respondents report positive or strong returns on their AI investments. Most of that group (65% of all respondents) describe returns in the 1-2x range, while a small group (4%) report 2-3x. Another 13% are at breakeven, and just 4% report negative ROI.

But dig into the details and the story gets more complicated.

13% of respondents haven't measured ROI at all. For the teams that have, the most commonly cited benefit is time savings, and the numbers there are modest: most teams are saving 1-2 hours per rep per week.

Those are real gains, and they add up across a sales org. But they're incremental. They suggest AI is shaving time off existing workflows rather than fundamentally changing how revenue teams operate.

For organizations spending significant budget on AI tooling, a couple hours of saved rep time per week may not feel like the transformation they were promised.

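Then there's the satisfaction score, which adds an uncomfortable tension: on a 7-point scale, average satisfaction with current AI tools sits at just 3.9, slightly below the midpoint.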

If 70% of teams are seeing positive ROI, why is satisfaction so mediocre?

The data points us in a few different directions.

- Teams may be measuring ROI loosely, relying on rough estimates of time saved rather than tracking business outcomes like pipeline velocity or win rates.

- The bar for "positive ROI" may simply be low when the primary metric is hours reclaimed.

- Or teams may sense that AI should be delivering more than it currently is, even if the math technically works out.

The gap between reported ROI and actual satisfaction suggests the industry needs better measurement frameworks. "Hours saved" is the most accessible metric, but it's also the least ambitious one. It captures efficiency without capturing effectiveness. And until RevOps teams develop clearer ways to measure whether AI is improving revenue outcomes, not just speeding up tasks, satisfaction is likely to stay stuck in that lukewarm range.

This matters especially heading into the next budget cycle. As we'll cover later, AI budgets are growing almost universally. Leaders approving those budgets will eventually want more than "we saved a couple hours per rep" as justification.

Where AI Is Trusted (And Where It’s Not)

Adoption is widespread. Use cases are expanding. But there's a clear line RevOps leaders are drawing around where AI is allowed to operate autonomously, and that line falls squarely at the point where human judgment enters the picture.

Across qualitative responses, pricing and deal desk decisions, forecast calls, context-dependent analysis, and strategic decisions were consistently flagged as off-limits for AI. Respondents specifically named pricing, forecasting, or nuance-heavy decisions as areas where they wouldn't trust AI to act without human oversight.

This trust boundary is intuitive. AI is welcomed for what feels like repetitive “low-value” data work: cleaning, enriching, scoring, surfacing patterns. It's kept at arm's length from relationship work and anything requiring contextual judgment.

tl;dr: A lead score is fine, but a pricing recommendation on a complex enterprise deal is not.

This tracks with where teams are actually deploying AI today (see Adoption and Where Teams Are Starting). The use cases practitioners call “overrated” reinforce the pattern: respondents pointed to autonomous SDR and outbound replacement, AI-powered data management and enrichment, email and messaging personalization, and conversational intelligence.

"I don't believe it's ready."

- One respondent's blunt take on autonomous outbound.

There’s a clear gap between where vendors are placing their bets and where practitioners actually feel confident deploying AI. This trust boundary may not be permanent, but it does mean vendors building products that require high levels of trust are ahead of the market. The willingness to let AI handle judgment calls will likely grow, but that trust has to be earned incrementally.

The Hidden Cost: GTM Knowledge Trapped in People’s Heads

Aside from the data we’ve discussed in previous sections, our research surfaced a problem that rarely makes it into vendor pitch decks: the sheer amount of critical go-to-market knowledge that lives in people's heads rather than in any system.

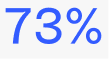

73% of respondents agreed that critical GTM context is stored in people, not tools. In practice, that means they answer the same GTM questions weekly or more frequently.

Questions like "why did pipeline change this week" or "how does pricing work for this segment" are being answered manually and repeatedly across organizations.

This creates a persistent drag on productivity that compounds over time. Every time a knowledgeable team member leaves, the organization re-learns the same lessons from scratch. Every time a rep pings three people on Slack to understand what happened with an account, that's time and context lost.

What makes this finding especially relevant is that almost none of the current AI tooling in RevOps is designed to solve it. The field has invested heavily in AI for process automation, lead scoring, and data cleanup. But the highest-frequency pain point practitioners described in our research is a knowledge retrieval problem, not a process or data hygiene problem.

This is the invisible tax on RevOps productivity that AI hasn’t solved yet, and it may be the highest-leverage opportunity in the space.

The biggest AI opportunity in RevOps isn’t automation; it’s institutional memory. The team that solves GTM knowledge retrieval wins.

Investment, Infrastructure, and the Road Ahead

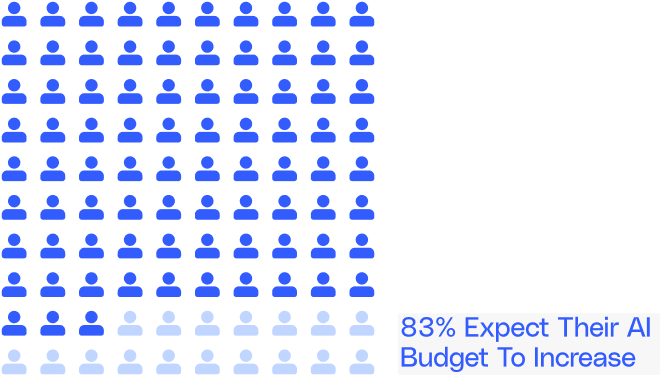

Despite middling satisfaction scores and unresolved data challenges, AI budgets are growing. A staggering 83% of respondents expect their AI investment to increase over the next year. 30% expect significant growth, 52% expect slight growth, and 17% expect budgets to hold flat.

Zero respondents expected budget cuts in AI investment.

That's a strong signal of organizational commitment, but it also raises a question.

If satisfaction sits at 3.9/7 and most teams are saving only 1-2 hours per rep per week, what's driving the continued spend?

The answer is likely a mix of competitive pressure, executive mandate, and a reasonable belief that the technology will improve faster than the current results suggest. Investment is running on conviction as much as evidence right now.

The barriers to getting more from that investment are familiar by this point in the report:

- Data quality remains the top blocker at 61%.

- Change resistance follows at 43%, which is worth underscoring. The people problem is real.

- Skill gaps (30%) and tool cost (26%) complete the picture, and together they point to a need that's easy to overlook: teams don't just need better software, they need enablement and internal buy-in to make it work.

The risk for organizations heading into the next budget cycle is straightforward. Adding more AI tools on top of a fragmented data foundation and a team that hasn't been brought along for the ride is a reliable way to replicate the current satisfaction gap at higher cost.

The teams that will get the most from increased budgets are the ones treating data infrastructure, measurement frameworks, and change management as prerequisites rather than parallel workstreams.

What Comes Next for RevOps Leaders

The data in this report tells a consistent story. AI adoption in RevOps is effectively universal, the returns are real but modest, and the gap between what teams are getting and what they expected is driven by foundational issues that more tooling alone won't fix.

Heading into the next budget cycle, three priorities stand out.

First, treat data infrastructure as the prerequisite. 61% of teams named data quality as their top blocker, and 62% expect the future tech stack to be an AI layer on top of consolidated systems. That order of operations matters. The AI layer works better when the foundation underneath it is solid.

Second, build a real ROI measurement framework before expanding investment. "Hours saved per rep" is a useful starting metric, but it's a floor. The gap between 70% positive ROI and 3.9/7 satisfaction suggests teams sense their current measurements aren't capturing the full picture. Better frameworks will lead to better investment decisions.

Third, focus AI on institutional knowledge. The GTM questions being re-answered every week across RevOps teams represent the kind of high-frequency, high-cost problem that AI is well suited to solve. It's also the problem almost no one is building for yet.

AI budgets are growing. The intent is there. Whether a team gets real value or stays stuck at 3.9/7 satisfaction will come down to one choice: invest in the prerequisites, or skip straight to the next tool.

Methodology and Demographics

This report is based on survey responses collected from 315 go-to-market professionals across Sales, Revenue Operations, Sales Enablement, and Marketing functions.

Date Surveyed: 30-day period, Jan 20, 2026 - Feb 19, 2026

Company Size:

- Enterprise (1,000+ employees): 70%

- Mid-Market (200–999 employees): 30%

Seniority:

- C-Suite (CRO): 7%

- VP / SVP: 30%

- Director / Senior Director: 40%

- Manager / Associate Director: 23%

Job Function:

- Revenue Operations: 50%

- Sales Operations: 23%

- Marketing Operations: 27%

- Sales Leadership: 13%

Industry:

- SaaS / Software: 87%

- IT Services: 10%

- Ecommerce: 3%