The State of Revenue Agents

This report is about inverting a model we have relied on for two decades of SaaS.

We conducted in-depth interviews with 50 senior revenue leaders at enterprise B2B software companies (1,000+ employees, 200+ reps) to understand how AI is actually being used in their organizations today.

The results led us to a single conclusion: AI should run the operation, and reps should run the plays that actually require a human: judgment, relationships, and the call on when to override the machine.

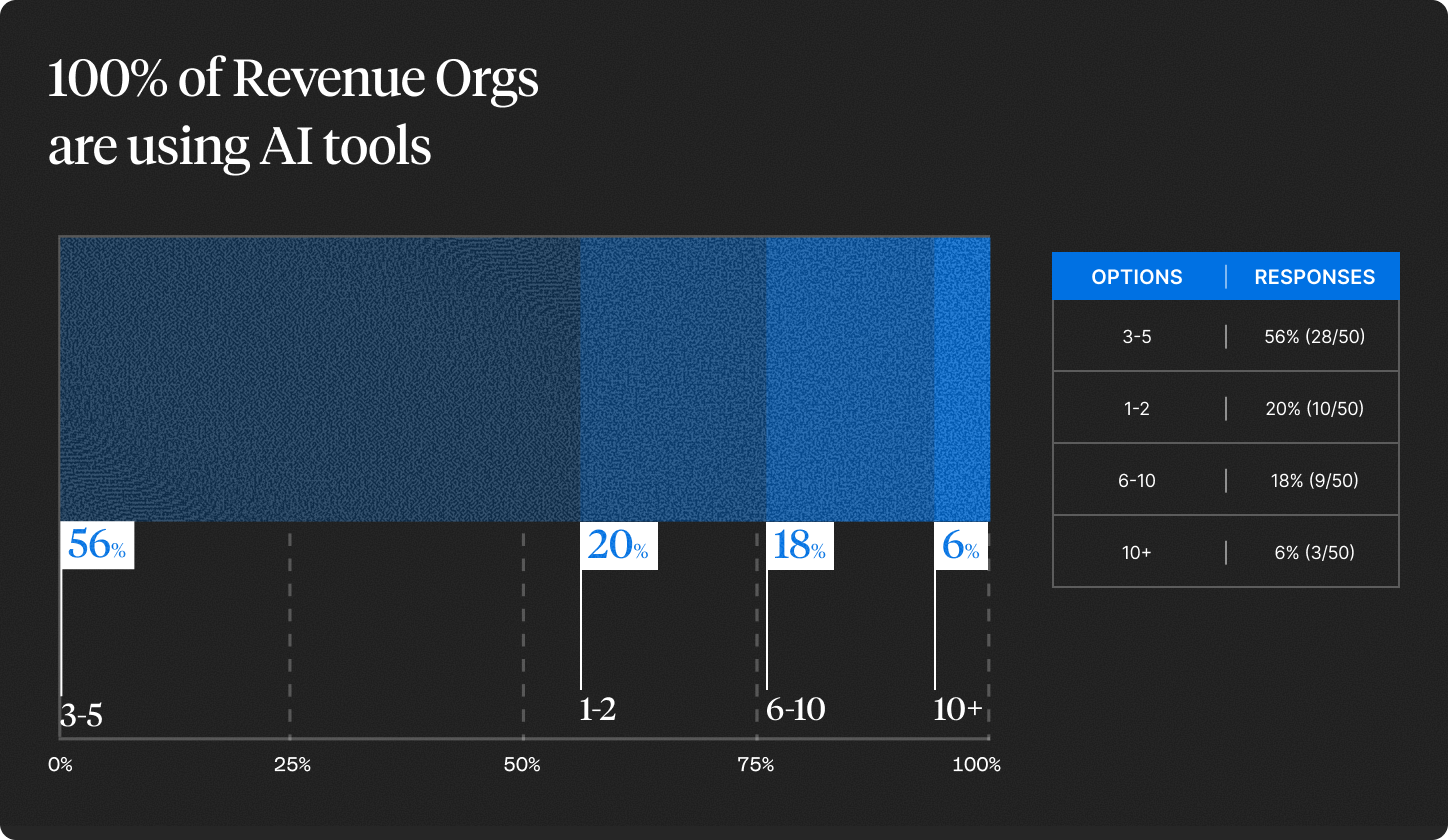

Today, revenue teams are operating on a model far from that. 80% of revenue orgs are running three or more AI tools. 24% are running six or more. And yet the day-to-day of running a revenue team looks almost identical to what it looked like before any of it showed up.

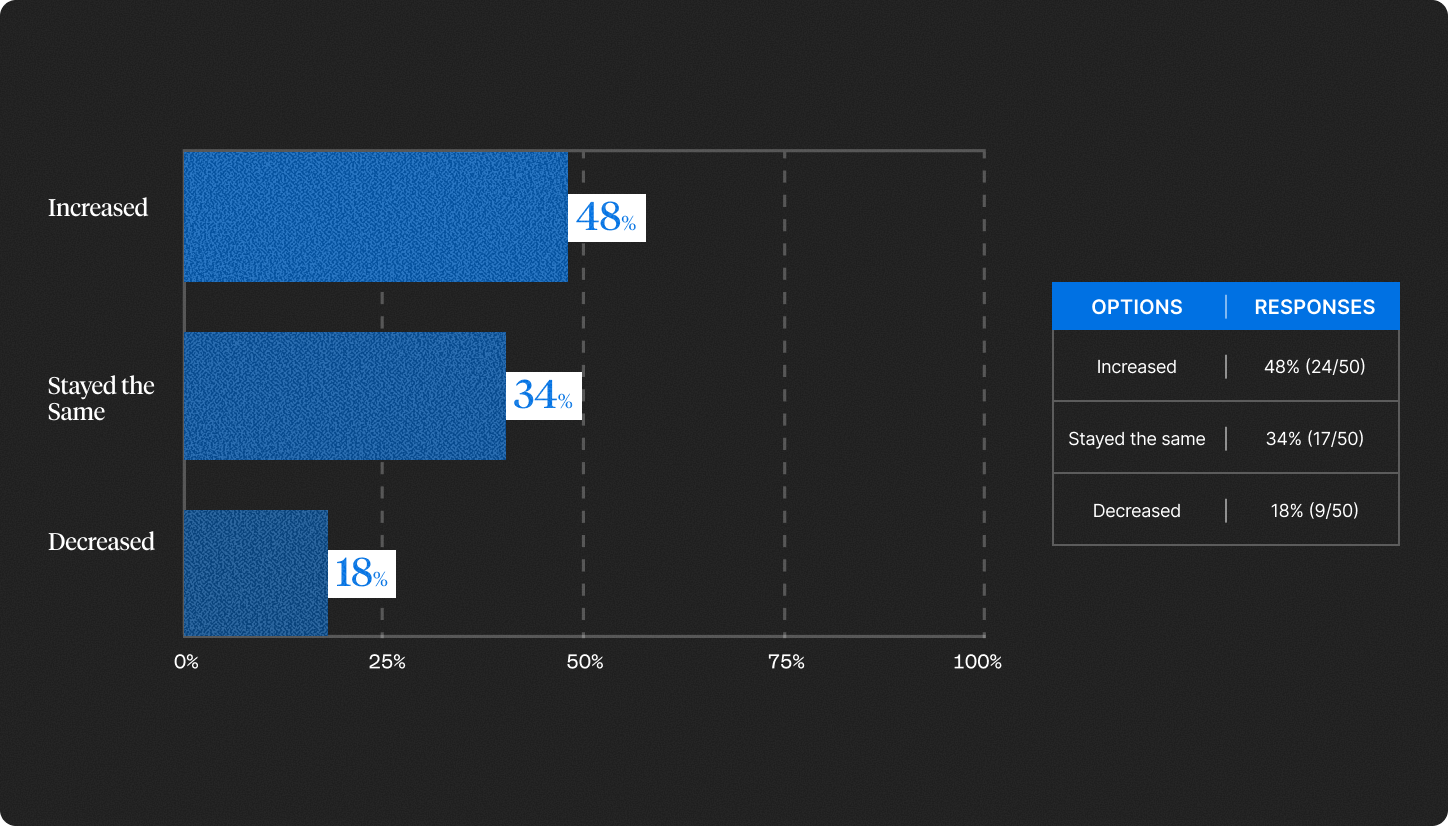

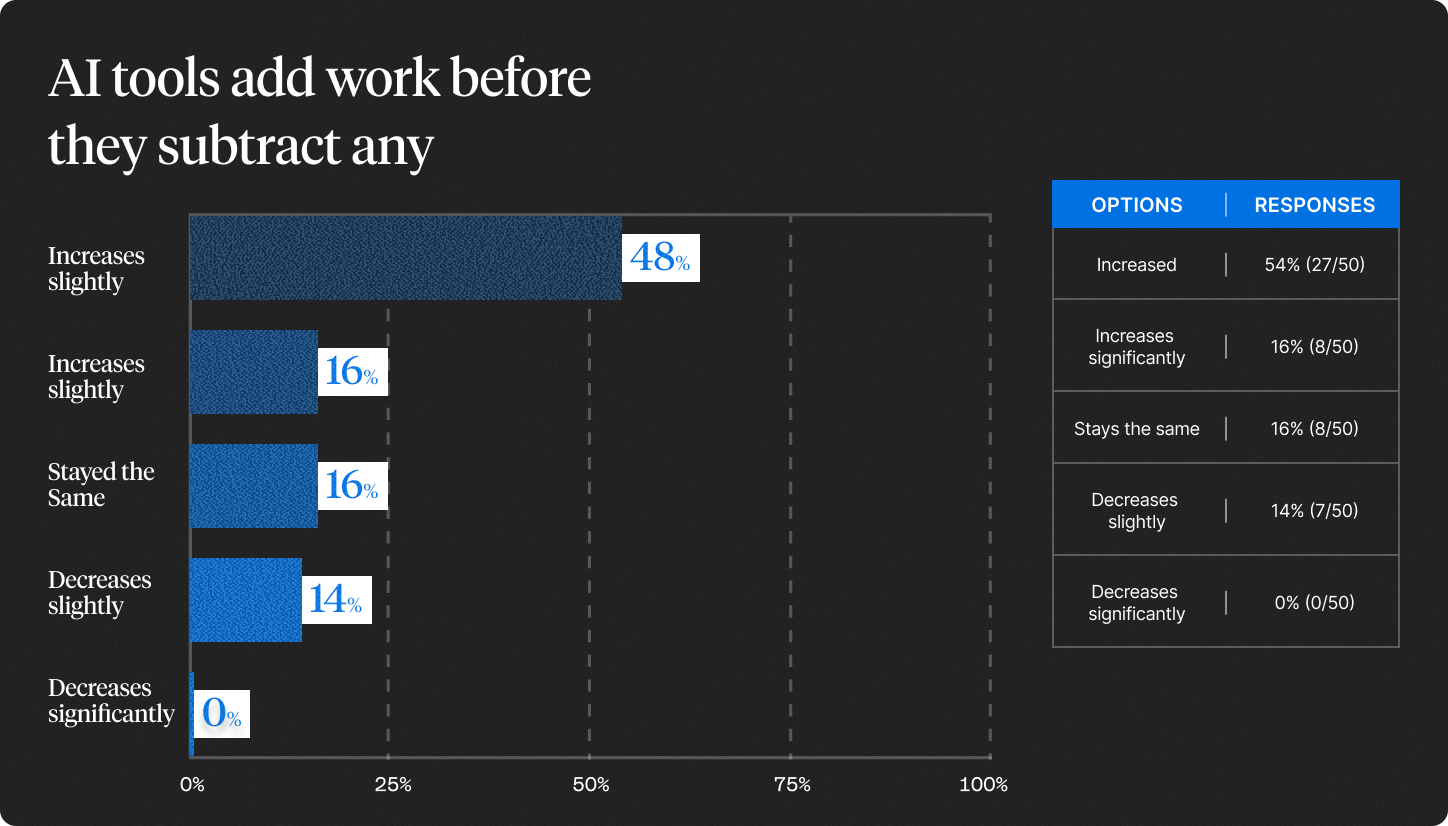

48% of leaders say their org has become *more* complex since adopting AI, not less. 70% say rep workload goes *up* in the first ninety days of a new tool, not down. These are the battle stories of leaders in the throes of adoption, frustrated by the gap between what was promised and what was delivered.

The tools are in place. The problems have not moved. That is the case for the inversion.

How revenue teams are actually using AI today

Adoption is saturated

We started this project by measuring how deep AI adoption in revenue orgs actually runs. The question was whether the AI narrative had translated into real spend, or whether it was still confined to boardroom conversations.

80% of the leaders we spoke with are running three or more AI tools across their org. 24% are running six or more. Every leader in the sample was using at least one.

The tech stacks contain what you would expect: note-takers and meeting summarizers, email drafters, enrichment layers, forecasting copilots, deal-desk assistants, coaching bots, and, for a growing share of orgs, autonomous agents handling outbound or research. What was a single "we're piloting Gong" conversation five years ago is now a portfolio of overlapping tools, each covering a slice of the rep's day.

The work did not get lighter

If the pitch of every AI tool is increased productivity and output, the experience of most leaders has been the opposite.

82% told us their org has either become more complex since adopting AI tools or stayed the same. Only 18% said complexity decreased.

The leaders who saw complexity increase all described the same pattern: a new tool comes in and solves part of a workflow but leaves the rest orphaned. The rep, or the ops team, becomes the connector between the new tool and everything else in the stack.

70% of leaders said rep workload goes up after a new tool rollout.

The tool lands, the workload spikes, and it doesn't always come back down. One VP described his team as being in "permanent onboarding": the last tool never fully embedded before the next one was bought.

The cycle compounds

If complexity and workload were two separate issues, the story would be easier to fix. They aren't.

Among the leaders who told us complexity had increased, 83% also said rep workload climbs with each new tool their team adds. The two aren't parallel problems. They're the same problem, reinforcing itself every time a new piece of AI enters the stack.

The leaders furthest into the AI adoption curve are, by their own account, the ones holding the most together by hand.

And the most fundamental problems haven't moved. 62% of leaders still name "not enough pipeline" as the root cause of missed quarters, the same answer the industry has been giving for a decade. 36% say reps aren't executing the right process. Every AI tool on the market claims to solve one of these. The data says none of them have.

AI hasn't changed who runs the operation. Reps are still the operators. The tools just generate more for them to sort through.

Why the model is upside down

The adoption data in Section 1 describes the symptoms: more tools, more complexity, more workload, and the same pipeline problems. The question is why a category this saturated is producing results this unremarkable. The answer predates AI entirely.

AI inherited the wrong model

Every piece of revenue software is built on the same premise: the human is the operator, and the software is the tool. The rep drives the CRM. The manager drives the dashboard. The human sits at the center, making the decisions, and the software exists to make them slightly more efficient at doing so.

That model was never wrong for SaaS. Salesforce, Gong, Outreach and Clari were all designed around it. AI was treated the same way.

The human still drives the workflow. AI generates a draft, suggests a next step, summarizes a call. A human reads it, judges it, and moves on. The tool got faster. The operator didn't change. You cannot pile faster tools onto a human-driven operation and expect the operation itself to transform.

The model assumes a knowledge base that doesn't exist


82% of the leaders we interviewed said that half or more of their organization's revenue knowledge is not documented in any system.

This is the structural reason the current model doesn't work. Every AI tool in a revenue stack implicitly assumes the knowledge is somewhere retrievable. On every enterprise revenue team we interviewed, it isn't. It lives in the heads of three or four people. The AI is operating on whatever crumbs made it into the notes.

66% of leaders said that losing their top three reps would be a serious problem, or that they were not confident the org could absorb it. Only 8% said they were very confident. The org doesn't run on systems. It runs on people. And none of what those people know is accessible to a machine.

The inversion

For two decades, the human ran the operation and the software made them faster. In the inverted model, the system runs the operation and the human is the resource it calls on when it needs judgment, relationships, or a decision only a person can make. The ceiling is no longer the human. It's the quality of the system running it.

The model has to flip.

Revenue Agents for the Enterprise

What AI-as-the-brain actually requires

If the model has to flip, the system has to do four things. None of them are optional.

1. Capture what the humans know

The 82% institutional knowledge gap is the starting constraint. Before anything else, the system has to extract the patterns, context, and signals that currently live in call recordings, notes, emails, and the heads of top reps, and make them readable to a machine.

2. Learn the patterns that separate winning from losing

Once the knowledge is extracted, the system has to reason across it: which sequences of actions lead to closed business, which segments respond to which plays, which buyer signals predict a close versus a stall. This is the capability leaders were describing when they talked about the new profile of a top rep.

Today, that pattern recognition lives in a few people.

3. Act on the patterns and route humans to what matters

This is where the current AI stack fails hardest. Today's tools generate outputs for humans to act on. A system that runs the operation has to take actions itself: prioritizing accounts, initiating outbound, researching deals, escalating to humans only for the work that requires judgment or relationship.

Leaders named this gap directly. What they have instead is AI that drafts emails when asked and formats meeting notes after the fact. The system does what the human tells it to. Nobody has a system that decides what needs to happen next.

4. Get better from every action and every override

Every action the system takes produces a result. Every time a human overrides it, that override is a signal. A real operating layer turns both into training data and improves. The longer it runs, the more specific it becomes to how this revenue org wins.

None of the four capabilities are theoretical. Each one maps to something top performers already do instinctively. The question is whether the next generation of revenue software will do them at the scale of the whole org.

A system that does these four things together is a Revenue Agent.

What changes for the revenue leader

In a Revenue Agent world, the rep profile changes, what a rep is for changes, and the math the CRO uses to plan the team changes.

The rep profile has already shifted

The old definition of a top rep is persistence, charisma, and a book of relationships. That is not what leaders describe today.

The profile emerging from the data is a rep who succeeds inside a system that handles execution around them.

The human becomes the judgment layer

70% of leaders said the primary value of a human rep, as AI handles more execution, is “all of the above”: relationship management, strategic judgment in complex deals, and creative problem-solving together, not any one of them in isolation. Individually, those three categories scored 46%, 42%, and 40%.

In a Revenue Agent world, the system runs the plays. The rep approves, overrides, and redirects. What leaders are describing in that 70% is a role that widens across human work, not one that specializes into a single task.

Productivity per rep is rising, but nobody agrees by how much

Leaders expect output per rep to climb as Revenue Agents take on more execution. They agree on the direction. They do not agree on the magnitude.

The median leader expects an additional $150,000 in revenue per person over the next two years. The range runs from $10,000 to roughly $1 million. Some of that spread is company size and deal value. But not all of it.

The leaders at the top of the range are planning for a world where AI runs the operation and every human action is higher leverage. The leaders at the bottom are planning for incremental productivity gains from better tools. Both are in the same market. Only one of them is planning for what comes next.

What a CRO has to reckon with

- Hiring moves first, because it takes the longest to compound. The CROs pulling ahead will be the ones who hire for the rep who holds a relationship and operates inside a running system, before the rest of the market agrees on the profile.

- Management shifts from inspection to coaching. When a Revenue Agent is handling execution, the moments flagged for a human become the manager's job: the stalled deal, the complex negotiation, the relationship repair.

- Productivity math has to be rebuilt. The models most CROs use to forecast AI's impact were built for an AI-as-tool world. In a Revenue Agent world, the math has different inputs and a different ceiling. The CROs who rebuild first have a compounding advantage.

The thesis of this report is that the model of revenue software that defined the last two decades of SaaS, in which humans hold the knowledge and software makes them faster at using it, has reached the limit of what it can produce.

The next phase of revenue software is not more AI on top of the existing model. It is a different model, in which the system holds the knowledge, runs the operation, and pulls humans in for the work that requires them. That system is what this report calls a Revenue Agent.

Three priorities follow from the data, in order.

First, capture institutional knowledge before bolting AI on. The 82% finding is the single most important number in this report. If your best sellers walked out the door this quarter, the org would not be able to rebuild what they know.

Second, figure out your best plays and where human judgment has to live. Flipping the model does not remove humans. It redeploys them to the moments that require relationship, judgment, or context the system cannot hold.

Third, rebuild the productivity math. The leaders forecasting incremental lift from AI tooling are planning for a different decade than the ones forecasting a million dollars per rep. Only one of them is planning for a Revenue Agent world.

The state of Revenue Agents today is that they don't yet exist at scale. The state of the industry is that they have to.
