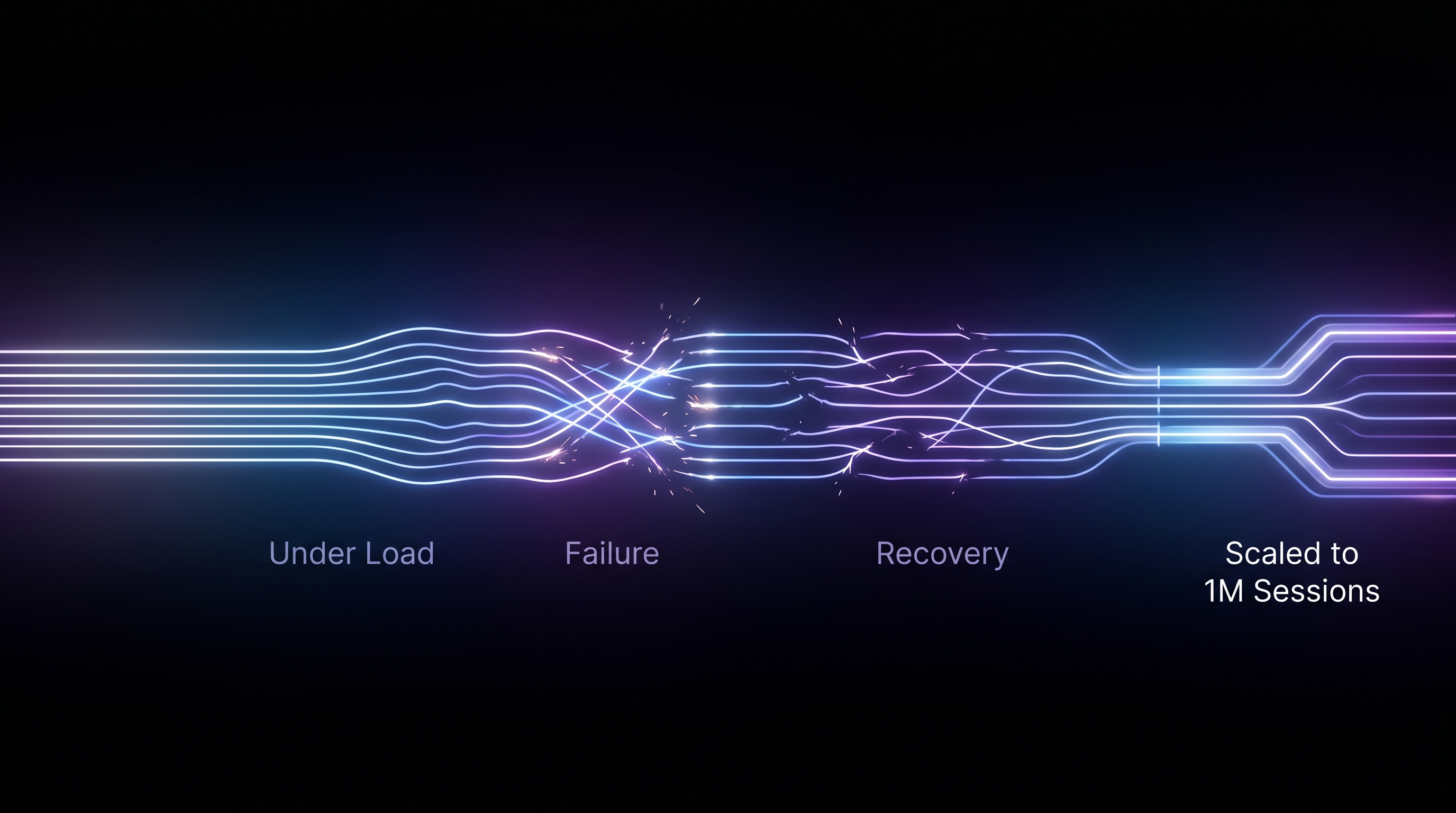

We Broke Every Part of Our Coding Agent Infrastructure on the Way to 1M Sessions a Day

Our agents couldn't do math. They could summarize a Gong call, extract themes from email threads, spot when a champion went quiet. But ask "which of my 200 deals had the largest drop in stakeholder engagement this quarter?" and the agent would hallucinate a ranking. It would confidently say Deal A dropped 34% when the real number was 12%. LLMs can't count, and they can't join three datasets and aggregate across nested fields.

So we gave them a code interpreter. A Python sandbox where the agent writes real code, executes it, and reasons from the output. That decision, made in about an afternoon, took eight months of production incidents to get right.

We now run roughly 1 million sandbox sessions per day across our customer base. Every sales intelligence agent in HockeyStack, the ones that generate tasks, score deals, brief reps, and detect buying signals, can drop into a Python sandbox when it needs to compute something. This is the story of everything that broke along the way.

V1: the afternoon prototype

The first version was simple. We spun up an E2B sandbox, gave the agent an execute_python tool, uploaded customer datasets as JSON files, and let it go. The agent would write Python, the sandbox would run it, and the result would feed back into the conversation. When done, the agent would save a markdown file and we'd download it.

It worked on the demo. Three datasets, 50 records total, clean data. The agent loaded everything, wrote a few pandas operations, produced a summary. We shipped it.

Problem 1: OOM at 200 accounts

The first real customer ran it against 4,200 deal records with nested Gong transcripts. Each transcript averaged 40KB of text. The agent's first move was deals = json.load(open('deals.json')), which loaded 168MB into sandbox memory. Then it loaded email threads (another 90MB), then stakeholder data. Peak memory hit 1.8GB. The sandbox had 2GB allocated. Python's JSON parser fragmented what was left, and the next pandas.DataFrame(deals) call OOM'd.

We tried bumping the sandbox to 4GB. That fixed one customer and broke the next one who had 8,000 accounts. We were playing whack-a-mole with memory limits.

The fix was to stop uploading raw data. Instead, we pre-process datasets on our side before the sandbox starts. The computeDatasetStructure function walks each dataset recursively and produces a type description:

    emails: 47 records. Structure of each record: object {4 keys:
      thread_id: string ("th_8f3a...")
      participants: array of 3 x object {2 keys: name, email}
      messages: array of 12 x object {4 keys: sender, body, timestamp, attachments}
      summary: string (340 chars, preview: "Discussion about Q2 pipeline...")
    }

That description goes into the system prompt. The agent knows the shape of every dataset before it writes a single line of code. For the actual data, we still upload JSON files, but we cap them. If a dataset exceeds the memory budget, the upstream pipeline pre-aggregates or samples it before the sandbox session starts. The sandbox stays at 2GB. It hasn't OOM'd in four months.
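To make the idea concrete, here is a minimal sketch of a recursive structure describer. It is written in Python for illustration only; the function names, the depth limit, and the preview lengths are assumptions, not the production computeDatasetStructure.

```python
from typing import Any


def describe(value: Any, depth: int = 0, max_depth: int = 2) -> str:
    """Return a compact type description of a JSON-like value."""
    if isinstance(value, dict):
        if depth >= max_depth:
            # Deeply nested objects are summarized on one line.
            return f"object {{{len(value)} keys: {', '.join(value)}}}"
        pad = "  " * (depth + 1)
        fields = "\n".join(
            f"{pad}{key}: {describe(item, depth + 1, max_depth)}"
            for key, item in value.items()
        )
        return f"object {{{len(value)} keys:\n{fields}\n{'  ' * depth}}}"
    if isinstance(value, list):
        if not value:
            return "array (empty)"
        # Assumes the first element is representative of the rest.
        return f"array of {len(value)} x {describe(value[0], depth + 1, max_depth)}"
    if isinstance(value, str):
        if len(value) > 40:
            return f'string ({len(value)} chars, preview: "{value[:30]}...")'
        return f'string ("{value}")'
    if isinstance(value, bool):  # must come before the int check
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if value is None:
        return "null"
    return type(value).__name__


def describe_dataset(name: str, records: list) -> str:
    """Describe a dataset by its record count and the shape of its first record."""
    if not records:
        return f"{name}: 0 records."
    return (
        f"{name}: {len(records)} records. "
        f"Structure of each record: {describe(records[0])}"
    )
```

Describing only the first record keeps the prompt short, at the cost of missing fields that appear only in later records.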

Problem 2: 40% of turns wasted on exploration

Even with structure descriptions, agents were burning turns. A 30-turn budget sounds generous until you watch an agent spend 12 turns on boilerplate: load file, print keys, print first record, check types, print length, check nested types. By the time it started actual analysis, it had 18 turns left. For complex multi-dataset analysis, that wasn't enough.

We attacked this from two angles.

First, we built a load_data.py helper that the agent runs with one line: exec(open('/home/user/load_data.py').read()). It loads every dataset into a named Python variable and prints a summary. The agent's first turn goes from "figure out what files exist" to "all data is loaded, start analyzing."
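A helper along these lines could look like the sketch below. The data directory, the one-variable-per-file convention, and the summary format are assumptions for illustration; the real load_data.py isn't reproduced here.

```python
import json
from pathlib import Path


def load_all(data_dir: str) -> dict:
    """Load every *.json file in data_dir and print a one-line summary of each."""
    datasets = {}
    for path in sorted(Path(data_dir).glob("*.json")):
        with path.open(encoding="utf-8") as fh:
            data = json.load(fh)
        datasets[path.stem] = data
        size = len(data) if isinstance(data, (list, dict)) else 1
        print(f"{path.stem}: {type(data).__name__}, {size} top-level items")
    return datasets


# Inside the sandbox, the helper would then expose each dataset as a named
# variable (hypothetical path):
#   globals().update(load_all("/home/user/data"))
```

Because the agent runs the helper with exec, anything it assigns at module level ends up in the agent's namespace, which is what makes the one-line invocation enough.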

Second, we added explicit turn budget guidance to the system prompt: "You have 30 tool-use turns. Do NOT waste turns on inspecting dataset structure (it is fully described above), printing entire datasets, or exploratory type-checking." Sounds obvious. But without it, every model we tested (Claude, GPT-4, Cerebras) would default to cautious exploration. The prompt override cut exploration turns from 12 to zero. Average task completion time dropped from 4 minutes 20 seconds to 2 minutes 10 seconds.

The numbers at scale: at 1M sessions/day, saving 2 minutes per session is roughly 33,000 compute-hours per day (1,000,000 × 2 minutes ÷ 60). At E2B's per-second pricing, that's real money.

Problem 3: hallucinated results survived the code interpreter

We expected the code interpreter to eliminate hallucination. It didn't. What happened instead was subtler: agents would write correct Python that operated on wrong assumptions.

The worst case was temporal reasoning. A Gong transcript from March 20 says "let's follow up in two days." The agent would compute today + timedelta(days=2) instead of datetime(2026, 3, 20) + timedelta(days=2). The resulting due date would be two days from whenever the agent ran, which could be weeks after the actual call. We had tasks showing up with due dates in the past and nobody understood why until we traced it to this class of error.

Agents also hallucinated join keys. Given a deals dataset and an emails dataset, the agent would assume both had a company_id field and write a join. Sometimes the email dataset used account_domain instead. The code would run, produce an empty DataFrame, and the agent would conclude "no email activity found" instead of realizing the join failed.

We fixed temporal reasoning with explicit anchoring rules in the system prompt. Concrete examples, not vague guidance:

A call on Monday Jan 5 where someone says "let's follow up in two days"

→ they mean Wednesday Jan 7, NOT today + 2 days.

An email from Friday March 13 saying "I'll get back to you next week"

→ they mean the week of March 16, NOT next week from today.

We added a hard rule: "Every date and weekday in your output MUST be verified with Python's datetime module. Do NOT compute day-of-week in your head." After adding that, temporal errors dropped from 23% of sessions to under 2%.
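The anchoring rule can be sketched with the datetime module. The helper names are hypothetical, and the year 2026 is an assumption chosen so the weekdays match the two examples.

```python
from datetime import date, timedelta


def resolve_relative(anchor: date, days: int) -> date:
    """Resolve 'in N days' relative to when it was said, not when the agent runs."""
    return anchor + timedelta(days=days)


def next_week_start(anchor: date) -> date:
    """Monday of the week after the anchor date, i.e. 'next week' as spoken."""
    return anchor + timedelta(days=7 - anchor.weekday())


call_date = date(2026, 1, 5)           # the call, not date.today()
follow_up = resolve_relative(call_date, 2)
email_date = date(2026, 3, 13)
reply_week = next_week_start(email_date)

# Verify weekdays in code instead of trusting the model's arithmetic.
print(call_date.strftime("%A %b %d"), "->", follow_up.strftime("%A %b %d"))
print(email_date.strftime("%A %b %d"), "->", "week of", reply_week.strftime("%b %d"))
```

The only input that changes between a correct and an incorrect due date is the anchor, which is why the rule insists on the source document's date.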

For join key errors, we pre-describe the relationships between datasets in the system prompt and instruct the agent to build lookup dictionaries by ID before iterating. This one we're still improving. About 4% of sessions still produce empty results from failed joins on edge-case schemas.
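One way to keep a failed join from reading as "no activity" is to build the lookup dictionary explicitly and raise when the keys don't line up. This is a sketch under assumed field names, not the guidance we actually ship in the prompt.

```python
def join_by_key(left, right, left_key, right_key):
    """Join two lists of dicts through a lookup dictionary, failing loudly on bad keys."""
    for side, rows, key in (("left", left, left_key), ("right", right, right_key)):
        # Only the first record is inspected; assumes records share one schema.
        if rows and key not in rows[0]:
            raise KeyError(
                f"{side} records have no field {key!r}; available: {sorted(rows[0])}"
            )

    # Build the lookup once, skipping records with a missing key value.
    lookup = {}
    for row in right:
        value = row.get(right_key)
        if value is not None:
            lookup.setdefault(value, []).append(row)

    pairs = [(l, r) for l in left for r in lookup.get(l.get(left_key), [])]
    if left and right and not pairs:
        # An empty join over non-empty inputs is treated as an error, not a finding.
        raise ValueError(
            f"join on {left_key!r} = {right_key!r} matched 0 of {len(left)} records"
        )
    return pairs
```

With this shape, a deals dataset keyed on company_id joined against emails keyed on account_domain raises immediately instead of returning an empty result.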

Problem 4: the agent needs API access but can't be trusted with API keys

The coding agent needs to talk to external services. It needs to call Claude for reasoning, fetch enrichment data, hit search APIs. Our first instinct was to pass API keys as environment variables into the sandbox. That lasted about a week. An agent wrote import os; print(os.environ) as a debugging step. Our Anthropic API key appeared in the tool output, went into the message history, and got logged. A different agent tried to curl an external endpoint directly using a key it found in its environment.

The fix wasn't better secret hiding. It was removing direct access entirely.

We built a gateway API on the HockeyStack side. The sandbox doesn't get API keys to Claude, or to any external service. Instead, before each session starts, we generate a short-lived session token scoped to that specific sandbox run. The token gets stored in MongoDB with the session ID, tenant ID, agent ID, and an explicit list of allowed operations. It's hashed with SHA-256 before storage so even a database leak doesn't expose raw tokens.

The coding agent can only call one endpoint: the HockeyStack API. Every request goes through our gateway with the session token in the header. The gateway validates the token, checks that the requested operation is in the token's scope, and if it passes, proxies the request to the actual external service using our own keys. The agent never sees a Claude API key, a search API key, or any other credential. It sees its session token and our API URL.

The scopes are deliberately narrow. A task generation agent might get: llm:chat, search:web, data:read. It doesn't get data:write or crm:push because those operations happen in a different workflow step. When an agent tries to call an operation outside its scope, a deterministic check on the API side rejects it before the request ever reaches an external service. We log these rejections. They happen more often than you'd expect. Agents regularly try to do things they weren't asked to do: writing to Salesforce when they're supposed to be analyzing data, calling enrichment APIs when they already have the data locally, making LLM calls with different model parameters than what we specified.

The rejection rate tells us something about the gap between what we intend agents to do and what they actually attempt. About 8% of sessions trigger at least one scope rejection. The agent usually recovers, falls back to working with what it has, and produces valid output anyway. The scope boundary acts as a forcing function: the agent can't go on fishing expeditions because the gateway won't let it.

Token lifecycle is simple. Generated before sandbox creation, active for the duration of the session (max 5 minutes), automatically invalidated when the sandbox is killed. If a session crashes without cleanup, a TTL index on the MongoDB collection expires the token after 10 minutes. There's no way to reuse a token from a previous session. There's no way to escalate scope after creation.
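The lifecycle rules reduce to two clocks and a revocation flag. The sketch below simulates them in memory with an injectable clock; the class and method names are hypothetical, and in production the sweep is done by the database's TTL index rather than application code.

```python
import time

MAX_SESSION_SECONDS = 5 * 60    # token is valid for at most the session cap
TTL_BACKSTOP_SECONDS = 10 * 60  # record is dropped even if cleanup never ran


class TokenStore:
    def __init__(self, clock=time.time):
        self._clock = clock
        self._records = {}

    def create(self, token_hash: str) -> None:
        """Called before the sandbox is created."""
        self._records[token_hash] = {"created_at": self._clock(), "revoked": False}

    def revoke(self, token_hash: str) -> None:
        """Called when the sandbox is killed."""
        if token_hash in self._records:
            self._records[token_hash]["revoked"] = True

    def is_active(self, token_hash: str) -> bool:
        record = self._records.get(token_hash)
        if record is None or record["revoked"]:
            return False
        return self._clock() - record["created_at"] <= MAX_SESSION_SECONDS

    def sweep(self) -> int:
        """Mimic the TTL backstop: delete records older than the backstop window."""
        now = self._clock()
        expired = [
            h for h, r in self._records.items()
            if now - r["created_at"] > TTL_BACKSTOP_SECONDS
        ]
        for h in expired:
            del self._records[h]
        return len(expired)
```

The backstop window is longer than the session cap on purpose: a crashed session's token is already unusable after five minutes, and the sweep only removes the leftover record.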

Problem 5: 15% of runs producing no output

At 30 max turns, about 15% of sessions would exhaust their budget without saving a result file. The agent would get deep into analysis, realize it needed a different approach, restart, and run out of time. We'd download nothing from the sandbox.

The fix was two injection points near the end of the turn budget. At 3 turns remaining: "You have only 3 iterations remaining. Write your final result NOW." At 1 turn remaining: "FINAL WARNING: This is your LAST iteration. Write to result.md RIGHT NOW or the task will fail."

These aren't gentle suggestions. They're injected as user messages into the conversation, overriding whatever the agent was doing. After adding them, output failure rate dropped from 15% to 2.7%. The remaining 2.7% are almost always unusually large datasets where 30 turns genuinely isn't enough. Those get retried with a larger allocation.

For the generic runner variant (the one using Cerebras instead of Claude Code), we set the ceiling at 200 iterations. Same injection pattern at 197 and 199. The higher ceiling means Cerebras sessions almost never hit the limit, but when they do, the same recovery mechanism kicks in.
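The injection logic is small enough to sketch in full. The message texts are the ones quoted above; the loop shape and the step callable are assumptions standing in for the real runner.

```python
def budget_warning(turns_used: int, max_turns: int):
    """Return the message to inject before the next turn, or None."""
    remaining = max_turns - turns_used
    if remaining == 3:
        return "You have only 3 iterations remaining. Write your final result NOW."
    if remaining == 1:
        return (
            "FINAL WARNING: This is your LAST iteration. "
            "Write to result.md RIGHT NOW or the task will fail."
        )
    return None


def run_session(step, max_turns: int = 30) -> list:
    """Drive the agent loop; `step` is a hypothetical callable(messages) -> bool (True when done)."""
    messages = []
    for turns_used in range(max_turns):
        warning = budget_warning(turns_used, max_turns)
        if warning:
            # Injected as a user message so it overrides whatever the agent was doing.
            messages.append({"role": "user", "content": warning})
        if step(messages):
            break
    return messages
```

With max_turns=200 the same function fires after 197 and 199 turns have been used, which is how one mechanism serves both runtime variants.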

Problem 6: two runtime variants for different workloads

We started with one execution path: Claude Code CLI running inside E2B with --print --output-format stream-json. It works well for complex analysis that requires domain reasoning, reading skill files, understanding sales processes. But it's slow (average 2m 10s) and expensive (Claude API costs per session).

For pure data crunching, computational analysis without domain judgment, we built a second path. A generic LLM-to-sandbox loop using Cerebras as the provider with temperature: 0.2 and reasoningEffort: 'high'. The agent gets only the execute_python tool. No skill files, no domain knowledge, just "here's data, here's a question, write Python." Cerebras sessions average 48 seconds and cost roughly a fifth of the Claude Code path.

The routing is decided by the workflow step definition. Steps that need domain knowledge use the Claude Code template. Steps that need computation use the generic runner. Most workflows use both: a Claude Code step to detect signals and reason about them, then a Cerebras step to compute metrics and validate.
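As a rough illustration of step-level routing, the sketch below picks a runtime from a flag on the step definition. The flag name, the config fields, and the runtime labels are assumptions; only the turn ceilings and the skill-file distinction come from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuntimeConfig:
    name: str
    max_turns: int
    uses_skill_files: bool


# Hypothetical labels for the two execution paths.
CLAUDE_CODE = RuntimeConfig("claude-code", max_turns=30, uses_skill_files=True)
GENERIC_RUNNER = RuntimeConfig("generic-cerebras", max_turns=200, uses_skill_files=False)


def pick_runtime(step: dict) -> RuntimeConfig:
    """Route on the step definition, not on anything the agent decides at run time."""
    return CLAUDE_CODE if step.get("needs_domain_knowledge") else GENERIC_RUNNER


workflow = [
    {"name": "detect_signals", "needs_domain_knowledge": True},
    {"name": "compute_metrics", "needs_domain_knowledge": False},
]
for step in workflow:
    print(step["name"], "->", pick_runtime(step).name)
```

Keeping the decision in the step definition means the choice is made once, when the workflow is authored, and stays deterministic across runs.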

What 1M sessions/day looks like

The system runs on E2B's infrastructure with our custom hs-claude-code template: Node.js 24, Python 3, Claude Code CLI, ripgrep, 2 CPU cores, 2GB RAM per sandbox. Each session gets fresh compute. Nothing is shared between sessions or between tenants.

At current volume:

The 8.3 average turns number is what we're proudest of. It means the pre-computation and prompt engineering work actually landed. Agents go straight to analysis, compute what they need, save the result, and stop. The median is 6 turns. The distribution has a long tail from multi-dataset analysis that requires iterative exploration, but even those rarely exceed 20.

The architecture we ended up with

Every piece of this system exists because something broke in production:

- E2B disposable sandboxes exist because agents can't be trusted with persistent compute.

- Pre-computed dataset structure exists because agents waste turns exploring what they already know.

- Turn budget injections exist because agents don't know when to stop.

- Temporal anchoring rules exist because LLMs can't reason about relative dates.

- Gateway API with scoped session tokens exists because agents will try to call services they shouldn't, print credentials they find, and escalate their own access if you let them.

- Two runtime variants exist because one model can't be the best at both reasoning and crunching.

- Output truncation at 4,000 chars exists because one agent's stdout inflated the context window to 400K tokens.
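The truncation in the last item is the simplest piece to illustrate. This is a minimal sketch assuming the cap applies to characters of tool output and that a short marker is appended; the function name and marker text are hypothetical.

```python
MAX_OUTPUT_CHARS = 4000


def truncate_output(text: str, limit: int = MAX_OUTPUT_CHARS) -> str:
    """Cap tool output before it enters the message history."""
    if len(text) <= limit:
        return text
    omitted = len(text) - limit
    # Tell the agent that output was cut so it doesn't treat it as complete.
    return text[:limit] + f"\n... [truncated {omitted} chars]"
```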

None of these were in the original design. Every one was a production incident that became a feature. That's probably the most honest thing we can say about building coding agent infrastructure at scale: the architecture is a fossil record of failures.