We Made Our AI Agents Cost-Aware and They Got Better at Their Jobs

We run AI agents that autonomously research companies, enrich contacts, generate emails, and push data into CRMs. These agents execute multi-step workflows across dozens of tools, hitting third-party APIs on every pass. Let an agent loop over a thousand companies with online_research set to high complexity, and you've committed to a real bill.

We found out the hard way. A prospecting agent burned through hundreds of enrichment calls in a single run, pulling phone numbers, personal emails, technographics, and job postings for companies that had already churned. Every call hits an external API that costs real money, and the agent had no idea.

Six months ago, we would have solved this with guardrails. Hard caps on API calls, deterministic tool selection logic, if/else chains deciding when an agent is "allowed" to enrich a phone number. That was the playbook before frontier models got genuinely good at reasoning. You built tight, deterministic workflows because the model couldn't be trusted to make judgment calls.

The obvious fix was rate limiting. Cap the calls, throttle the loops, set a hard budget. We tried that first. The agents got worse. They'd hit their ceiling halfway through a batch, skip the highest-value companies, and produce incomplete results that needed manual cleanup. Rate limits turned capable agents into broken ones.

So we tried treating the agent more like a new employee with a company card. We stopped limiting what agents could spend and started telling them what things cost. Once we shifted from trying to control the agent to giving it enough context to make good decisions, the results spoke for themselves.

The Rate Card

We wrote a price list for every operation an agent can perform:

FIRST PARTY (our data)

list_records: 1 credit/call

get_gong_calls: 1 credit/record

THIRD PARTY (external enrichment)

online_research (low): 1 credit/record, e.g. "Have they raised funding?"

online_research (medium): 2 credits/record, e.g. "What are their strategic priorities?"

online_research (high): 5 credits/record, e.g. "Analyze their clinical trials and competitors"

contact profile: 2 credits/record

work email: 2 credits/record

personal email: 4 credits/record

phone number: 10 credits/record

GENERATIVE

synthesize_analysis: 10 credits/call

generate_email: 3 credits/record

ACTIONS

slack message: 2 credits/call

CRM update: 2 credits/record

send email: 2 credits/record

The pricing mirrors our actual costs. Phone numbers are 10 credits because phone data is genuinely expensive to source and verify. Personal emails cost double work emails because they require more aggressive enrichment pipelines. Internal data lookups are cheap because the data is already ours. The rate card is a map of the underlying data market.

We injected this into the agent's system prompt. The agent sees the full price list before planning its approach. It knows a phone lookup costs 5x a profile lookup. It knows deep research costs 5x a simple yes/no question.

We didn't add enforcement or budget caps. The agent sees the prices. That's it.
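In practice, injecting the rate card can be as simple as rendering it into the system prompt as text. Below is a minimal sketch that assumes a Python-side encoding of the card. `RATE_CARD`, `render_rate_card`, and `BASE_PROMPT` are hypothetical names, and the prices are the ones listed above. Nothing in it enforces anything. The function only produces text.

```python
# Hypothetical encoding of the rate card above: section -> (operation, unit, credits).
RATE_CARD: dict[str, list[tuple[str, str, int]]] = {
    "FIRST PARTY (our data)": [
        ("list_records", "call", 1),
        ("get_gong_calls", "record", 1),
    ],
    "THIRD PARTY (external enrichment)": [
        ("online_research (low)", "record", 1),
        ("online_research (medium)", "record", 2),
        ("online_research (high)", "record", 5),
        ("contact profile", "record", 2),
        ("work email", "record", 2),
        ("personal email", "record", 4),
        ("phone number", "record", 10),
    ],
    "GENERATIVE": [
        ("synthesize_analysis", "call", 10),
        ("generate_email", "record", 3),
    ],
    "ACTIONS": [
        ("slack message", "call", 2),
        ("CRM update", "record", 2),
        ("send email", "record", 2),
    ],
}


def render_rate_card(card: dict[str, list[tuple[str, str, int]]]) -> str:
    """Render the rate card as plain text for the system prompt (informational only)."""
    lines = ["Credit costs for every operation you can perform:"]
    for section, entries in card.items():
        lines.append("")
        lines.append(section)
        for operation, unit, credits in entries:
            noun = "credit" if credits == 1 else "credits"
            lines.append(f"- {operation}: {credits} {noun}/{unit}")
    return "\n".join(lines)


BASE_PROMPT = "You are a prospecting agent."  # hypothetical placeholder prompt
system_prompt = BASE_PROMPT + "\n\n" + render_rate_card(RATE_CARD)
print(system_prompt)
```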

Then we watched what happened.

The Setup

We ran cost-aware and cost-blind agents side by side on identical workloads for four weeks. 12,000 total agent runs across the same target companies and enrichment goals. The only variable was whether the agent could see the rate card.

Phone Lookups Dropped 68%

Cost-blind agents pulled phone numbers for every contact by default. To them, phone was just another enrichment field, with no signal that it was any different from grabbing a job title.

Cost-aware agents treated phone as a last resort. They'd enrich the profile and work email first, check whether the contact had an existing communication thread in our system, and only pull the phone number when the task specifically called for a cold call sequence.

The phone lookups that survived were higher quality. The agents were targeting contacts where phone outreach was the clear best channel, instead of enriching every record identically. We checked this by looking at downstream task completion rates for phone-enriched contacts: the cost-aware agents' phone pulls led to 23% more completed outreach sequences, because they were only spending 10 credits when the 10 credits actually mattered.

Research Complexity Self-Calibrated

This was the most surprising result. Cost-blind agents defaulted to high-complexity research for everything. Why wouldn't they? From the agent’s perspective, it’s the dominant strategy: more research often produces a better answer, and it never produces a worse one. Without a cost signal, there’s simply no reason to choose otherwise.

Cost-aware agents started matching research depth to the actual question. "Does this company use Kubernetes?" became a low-complexity lookup (1 credit) instead of a deep competitive analysis (5 credits). "What's driving their expansion into APAC?" stayed high-complexity, because the agent recognized the question genuinely required multi-source synthesis.

We tagged each research call with its complexity tier and compared the distributions:

| Complexity tier | Cost-blind | Cost-aware |
|---|---|---|
| Low | 12% | 41% |
| Medium | 34% | 38% |
| High | 54% | 21% |

The total amount of research was roughly the same. The distribution shifted dramatically. The agents weren't doing less work. They were scoping their work to match the question.
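Because the total amount of research stayed roughly constant, the tier mix alone determines average research spend. Here is a back-of-the-envelope sketch using the rate-card prices and the percentages in the table above, assuming each research call covers a single record. The names are illustrative. Under those assumptions the shift works out to roughly 37% fewer credits per research call, which applies to research only, not to the whole workflow.

```python
# Credits per record for each online_research tier, from the rate card.
TIER_CREDITS = {"low": 1, "medium": 2, "high": 5}

# Share of research calls (in percent) per tier, from the table above.
DISTRIBUTIONS = {
    "cost-blind": {"low": 12, "medium": 34, "high": 54},
    "cost-aware": {"low": 41, "medium": 38, "high": 21},
}


def avg_credits_per_call(shares: dict[str, int]) -> float:
    """Expected credits per research call for a given tier mix (one record per call)."""
    if sum(shares.values()) != 100:
        raise ValueError("tier shares must sum to 100%")
    return sum(TIER_CREDITS[tier] * pct for tier, pct in shares.items()) / 100


blind = avg_credits_per_call(DISTRIBUTIONS["cost-blind"])
aware = avg_credits_per_call(DISTRIBUTIONS["cost-aware"])
print(f"{blind:.2f} {aware:.2f} {1 - aware / blind:.0%}")  # 3.50 2.22 37%
```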

This matters because high-complexity research calls fan out to multiple web scraping APIs, take longer to return, and produce more noise in the results. For a simple binary question ("Do they have a SOC 2 certification?"), a 5-credit deep dive produces the same answer as a 1-credit lookup, just slower and noisier. The cost signal helped agents match tool to task.

Agents Started Batching

We didn't design for this one. When agents could see that each online_research call costs credits per record, they began consolidating related questions into single research passes.

An agent researching a company's tech stack and hiring activity would previously make two separate calls. With cost visibility, it combined them: "What technologies do they use and are they actively hiring in engineering?" One medium-complexity query instead of two. The answers were equivalent. Credit consumption dropped ~30% for multi-question research tasks.

The mechanism is straightforward. The agent can see that two 2-credit calls cost 4 credits, and one 2-credit call with a broader question costs 2 credits. It makes the obvious choice. What's interesting is that without the cost signal, no agent ever batched. The default behavior is one question per call, because there's no incentive to optimize.
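The arithmetic in that paragraph fits in a tiny sketch. `research_cost` is a hypothetical helper, and each call is assumed to cover one record unless `records` says otherwise. This is the idealized per-question saving. The ~30% figure above is the measured drop across all multi-question research tasks.

```python
# Credits per record for each online_research tier, from the rate card.
RESEARCH_CREDITS = {"low": 1, "medium": 2, "high": 5}


def research_cost(tiers: list[str], records: int = 1) -> int:
    """Credits for a set of online_research calls, each run over `records` records."""
    return sum(RESEARCH_CREDITS[tier] for tier in tiers) * records


# Tech stack and hiring activity asked as two separate medium-complexity calls...
separate = research_cost(["medium", "medium"])
# ...versus one combined medium-complexity question.
batched = research_cost(["medium"])
print(separate, batched)  # 4 2

# Over a 1,000-company batch, the gap scales linearly.
print(research_cost(["medium", "medium"], records=1000), research_cost(["medium"], records=1000))
```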

Output Quality Went Up

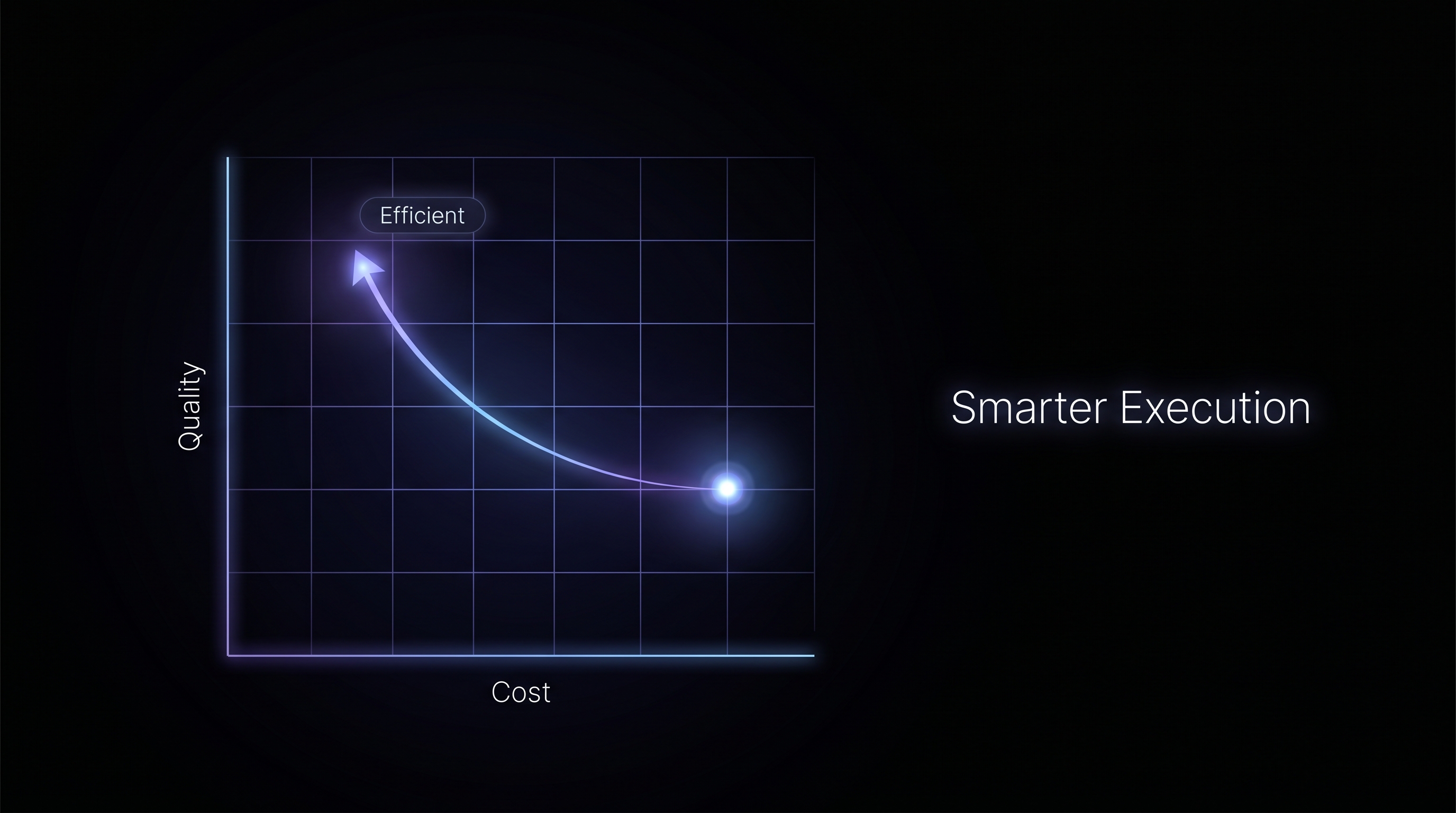

We score agent outputs on a rubric of relevance, completeness, and actionability, each rated 1-10. Cost-aware agents averaged 8.2 versus 7.2 for cost-blind agents, a 14% improvement.
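The 14% is the relative gain of 8.2 over 7.2 (about 13.9%, rounded). The sketch below also assumes the overall score is an unweighted mean of the three criteria, which the rubric description doesn't specify. `RubricScore` and `relative_improvement` are illustrative names, not part of any described system.

```python
from dataclasses import dataclass


@dataclass
class RubricScore:
    relevance: int
    completeness: int
    actionability: int

    def __post_init__(self) -> None:
        # Each criterion is rated on a 1-10 scale.
        for name in ("relevance", "completeness", "actionability"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")

    def overall(self) -> float:
        # Assumption: overall score is the unweighted mean of the three criteria.
        return (self.relevance + self.completeness + self.actionability) / 3


def relative_improvement(baseline: float, treatment: float) -> float:
    """Relative gain of treatment over baseline, e.g. 0.14 for +14%."""
    return (treatment - baseline) / baseline


print(round(relative_improvement(7.2, 8.2) * 100, 1))  # 13.9
```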

Our best explanation: the cost signal functions as a forcing function for relevance. When the agent has to weigh whether a 10-credit phone lookup actually serves the task goal, it ends up thinking harder about the task goal itself. Is this contact actually reachable by phone? Is phone the right channel for this persona? These are questions the agent should always ask, but without a cost signal, there's no trigger to ask them.

We saw the same pattern with synthesize_analysis (10 credits/call). Cost-blind agents would generate synthesis reports for every batch regardless of whether downstream tasks needed them. Cost-aware agents only synthesized when the output fed into something concrete, like a meeting prep brief or a deal summary. Fewer reports, but each report was more focused.

Behaviors We Didn't Anticipate

Agents occasionally upgraded their research for high-stakes accounts. This was the result that convinced us the system worked. For large enterprise accounts in active deal cycles, cost-aware agents chose high-complexity research even when medium would have sufficed. The output was feeding into a meeting prep brief for a $200K deal. Five credits is nothing relative to the value of a well-prepared enterprise meeting.

Agents started narrating their cost reasoning. Unprompted. In the reasoning traces, we'd see things like: "Profile enrichment (2 credits) sufficient here. Work email likely available from existing CRM record, skipping 2-credit email lookup." The rate card gave agents a vocabulary for trade-off reasoning they didn't have before. Every decision that was previously implicit became explicit and auditable.

Agents preferred internal data over external enrichment. If a company's employee count was available in our get_employee_count tool (free) and also via online_research (1-2 credits), cost-aware agents checked internal data first and only fell back to external research when internal data was stale or missing. Cost-blind agents hit the external API 100% of the time. No preference, no reason to have one.
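The agents reached this policy through their own reasoning. We did not code it. Written out as code purely for illustration, the decision looks roughly like the sketch below. The stub tools, the `as_of` timestamp, and the 90-day staleness cutoff are all assumptions. The post only says internal data was used unless it was stale or missing. The external fallback is assumed to be a 1-credit low-complexity call.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, Optional


@dataclass
class InternalEmployeeCount:
    value: int
    as_of: date  # hypothetical: when our internal copy was last refreshed


def employee_count_internal_first(
    company: str,
    internal_lookup: Callable[[str], Optional[InternalEmployeeCount]],
    external_research: Callable[[str], int],
    today: date,
    max_age: timedelta = timedelta(days=90),  # assumed staleness cutoff
) -> tuple[int, int]:
    """Return (employee_count, credits_spent), preferring free internal data."""
    record = internal_lookup(company)
    if record is not None and today - record.as_of <= max_age:
        return record.value, 0  # fresh internal data costs nothing
    # Missing or stale: fall back to low-complexity online_research (assumed 1 credit).
    return external_research(company), 1


# Usage with stubs standing in for get_employee_count and online_research.
internal = {"acme": InternalEmployeeCount(420, date(2025, 1, 10))}
fresh = employee_count_internal_first("acme", internal.get, lambda c: 450, today=date(2025, 2, 1))
stale = employee_count_internal_first("acme", internal.get, lambda c: 450, today=date(2025, 6, 1))
missing = employee_count_internal_first("globex", internal.get, lambda c: 450, today=date(2025, 2, 1))
print(fresh, stale, missing)
```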

The agents were making ROI calculations. That's what cost awareness actually is: the ability to weigh spend against expected value. Cheap when cheap is appropriate. Expensive when the situation demands it.

What We Tried That Didn't Work

Inflated pricing. Early on, we tried setting phone lookups to 50 credits (5x actual cost) to discourage overuse. The agents avoided phone entirely. Every contact record came back under-enriched. Outreach sequences that genuinely needed phone data failed because the agent wouldn't pay 50 credits for anything.

When we set the price to 10 (the real cost), agents used phone lookups sparingly and appropriately. The rate card only works as a signal because it maps to reality. Inflate it and you've just invented a different kind of rate limit, one that distorts behavior in ways you can't predict.

Per-tool costs without total visibility. When we only showed individual tool prices, agents optimized locally. They'd pick the cheapest tool at each step. When we added projected total cost for the full workflow, they started optimizing globally. Sometimes that meant choosing a more expensive tool early to avoid three cheap ones later. The shift from local to global optimization was significant.
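To make the local-versus-global difference concrete, here is a toy example with two hypothetical plans for a single record, priced with the rate card's online_research tiers. It does not depict a production planner. `projected_total` simply sums a plan's steps, which is the same kind of whole-workflow number described above.

```python
# Per-record credit costs for the two research tiers used in this toy example.
CREDITS = {"online_research (low)": 1, "online_research (medium)": 2}


def projected_total(plan: list[str]) -> int:
    """Projected credits for a whole workflow plan, summed over every step."""
    return sum(CREDITS[step] for step in plan)


# Hypothetical alternative plans for the same research goal.
plans = {
    # Cheapest first step, but its narrow answer forces three follow-up lookups.
    "narrow_first": ["online_research (low)"] * 4,
    # Pricier first step whose broader answer needs no follow-ups.
    "broad_first": ["online_research (medium)"],
}

# A local optimizer compares only first steps; a global one compares whole-plan totals.
local_choice = min(plans, key=lambda name: CREDITS[plans[name][0]])
global_choice = min(plans, key=lambda name: projected_total(plans[name]))
for name, plan in plans.items():
    print(f"{name}: projected total {projected_total(plan)} credits")
print(local_choice, global_choice)  # narrow_first broad_first
```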

No escalation for "free" tool chains. We price internal lookups like get_employee_count at zero credits, since the data is ours and carries no external cost. But we noticed agents chaining five or six internal tools together reflexively, calling every available data source "just in case." The orchestration cost is real even when the API cost is zero: more tool calls mean more tokens, more latency, and more noise in the context window.

We added an escalation rule: any operation that chains 3+ free tools is flagged as medium effort and charged 1 credit. This reduced unnecessary tool chaining by 22% without affecting output quality. The agents stopped grabbing everything and started grabbing what they needed.
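A minimal sketch of the escalation rule, under three assumptions: prices are flat per call, the rule counts free calls (not distinct tools) within one operation, and the 1-credit charge is added once per operation. `get_employee_count` comes from the post. The other internal tool names are hypothetical.

```python
# Hypothetical per-call prices; internal tools are free.
PRICES = {
    "get_employee_count": 0,
    "get_account_notes": 0,    # hypothetical internal tool
    "get_open_deals": 0,       # hypothetical internal tool
    "get_recent_activity": 0,  # hypothetical internal tool
    "online_research (low)": 1,
}

FREE_CHAIN_THRESHOLD = 3  # 3+ free calls in one operation triggers escalation
FREE_CHAIN_CHARGE = 1     # flagged as "medium effort"


def operation_cost(tool_calls: list[str]) -> int:
    """Credits for one operation: listed prices plus a flat charge for long free chains."""
    paid = sum(PRICES[tool] for tool in tool_calls)
    free_calls = sum(1 for tool in tool_calls if PRICES[tool] == 0)
    escalation = FREE_CHAIN_CHARGE if free_calls >= FREE_CHAIN_THRESHOLD else 0
    return paid + escalation


print(operation_cost(["get_employee_count", "get_open_deals"]))  # 0
print(operation_cost(["get_employee_count", "get_open_deals", "get_account_notes"]))  # 1
```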

The Numbers

Over four weeks and 12,000 side-by-side agent runs, cost-aware agents cut credit consumption by 42% and scored 14% higher on output quality.

At $0.08 per credit, a customer running 200 agents daily saves roughly $28K per year from the credit reduction alone. The quality improvement means less human review on top of that.
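The post doesn't state per-agent credit usage, but the $28K figure can be worked backwards. The sketch below assumes "200 agents daily" means 200 agent runs per day, 365 days a year, and that the $28K reflects the 42% credit reduction reported for the experiment. The function names are illustrative.

```python
PRICE_PER_CREDIT_USD = 0.08  # from the post
AGENTS_PER_DAY = 200         # from the post; assumed to mean 200 agent runs per day
DAYS_PER_YEAR = 365


def annual_savings_usd(credits_saved_per_agent_day: float) -> float:
    """Yearly dollar savings for a given per-agent daily credit reduction."""
    return credits_saved_per_agent_day * AGENTS_PER_DAY * DAYS_PER_YEAR * PRICE_PER_CREDIT_USD


def implied_credits_saved_per_agent_day(annual_usd: float) -> float:
    """Invert annual_savings_usd: credits each agent must save per day."""
    return annual_usd / (AGENTS_PER_DAY * DAYS_PER_YEAR * PRICE_PER_CREDIT_USD)


saved = implied_credits_saved_per_agent_day(28_000)
# Assumption: the $28K reflects the 42% reduction, so saved = 0.42 * baseline.
implied_baseline = saved / 0.42
print(round(saved, 1), round(implied_baseline, 1))  # 4.8 11.4
```

Under those assumptions, each agent saves roughly 4.8 credits a day, implying a baseline of roughly 11.4 credits per agent per day.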

The Bigger Shift

A year ago, we built agent workflows like assembly lines. Every decision point was an if/else branch we wrote. Every tool call was gated by deterministic logic. The agent was an executor, not a decision-maker. You had to build it that way, because the models weren't reliable enough to delegate real judgment to.

That changed somewhere around Opus 4.5 and the models that followed. The reasoning got good enough that the bottleneck flipped. The constraint stopped being "can the model make good decisions?" and became "are we giving it enough context to make good decisions?"

Rate limiting is a deterministic solution to a judgment problem. It says "you can't spend more than X" instead of "here's what things cost, figure it out." The first approach scales with engineering effort (every new tool needs a new limit). The second scales with model capability (better models make better trade-offs automatically).

Every team building production agents will hit this cost problem. The instinct is to add more guardrails, more limits, more approval gates. We tried all of those. They made the agents worse.

What worked was treating the agent like an adult employee with a company card and an expense report. Give it the price list. Let it plan. Review the receipts after.

The 42% cost reduction is real. The 14% quality improvement is the part worth paying attention to. When you stop micromanaging agents and start informing them, the work gets better across the board. The cost signal was a forcing function, but the underlying lesson is broader: as models get smarter, delegating more reasoning into the model produces better results than constraining it from the outside.